Disinformation Code

An industry-led initiative

About the code

A voluntary industry initiative to tackle online disinformation and misinformation through transparency, accountability, and collaboration.

Signatories

Industry signatories commit to safeguards that reduce online disinformation and harmful misinformation.

WHAT IS THE AUSTRALIAN CODE OF PRACTICE ON DISINFORMATION AND MISINFORMATION?

The Australian Code of Practice on Disinformation and Misinformation

The Australian Code of Practice on Disinformation and Misinformation is a commitment from a diverse set of technology companies to implement safeguards that reduce the spread and visibility of mis- and disinformation online.

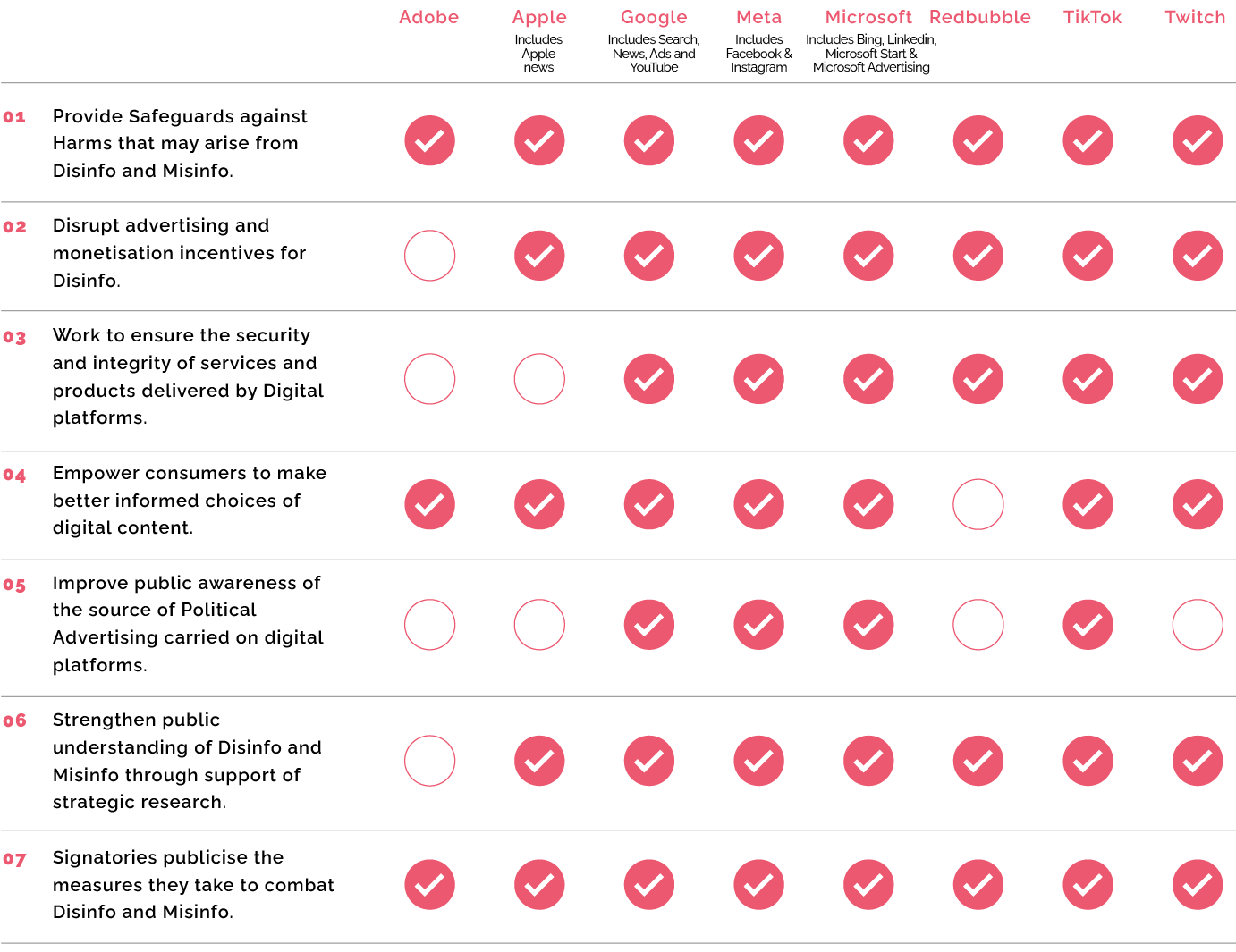

Currently Adobe, Apple, Facebook, Google, Microsoft, Redbubble, TikTok and Twitch have adopted the Australian Code of Practice on Disinformation and Misinformation.

Signatories commit to implementing a range of scalable measures that reduce the risk of online disinformation and misinformation causing harm to Australians. They release annual transparency reports on their efforts, helping to build a clearer understanding of how online disinformation and misinformation are being addressed in Australia.

For more information, watch this short video from DIGI’s Managing Director, Sunita Bose.

SIGNATORIES

The Australian Code of Practice on Disinformation and Misinformation.

DOWNLOAD THE CODE

JULY 2024

Download the code

This is the latest version of the Australian Code of Practice on Disinformation and Misinformation, launched on 26 July 2024.

CODE DEVELOPMENT

DECEMBER 2022

2022 Submission Report

This report sets out the outcome of the 2022 code review, including the changes made to the code and how stakeholder feedback was addressed.

JUNE 2022

2022 Annual Report

This report presents research on Australians’ perceptions of misinformation and describes how the code has evolved since its initial launch.

JUNE 2022

2022 Review Discussion Paper

This discussion paper provides background and specific questions and proposals to assist public consultation on the code review. It takes into account the ACMA’s report to the previous Government that was released in March 2022.

FEBRUARY 2021

2021 Submission Report

DIGI conducted public consultation on a draft code in October 2020 and closely reviewed all public submissions to inform changes to the first version published in February 2021.

2025 TRANSPARENCY REPORTS

The fifth set of reports was published in May 2025.

They cover data from the 2024 calendar year (January to December 2024). The reports have been reviewed by an independent expert, Shaun Davies.

SIGNATORIES’ COMMITMENTS

For a more detailed breakdown of the outcomes under each objective that signatories have adopted, view the opt-in disclosures provided in 2021. The latest information about signatories’ activities relating to each of their commitments, and any changes to those commitments, can be found in the most recent transparency reports.

FREQUENTLY ASKED QUESTIONS

What is the difference between misinformation and disinformation?

What kinds of commitments are signatories making under the code?

Why is this a voluntary code, not mandatory?

MORE INFO

Lodge a complaint

To lodge a complaint under the code, please use the complaint form here. DIGI only accepts complaints from members of the Australian public who believe a signatory has materially breached the code’s commitments. DIGI cannot accept complaints about individual items of content on signatories’ products or services, and asks that these be directed to the signatory via its reporting mechanisms or otherwise.

Get in touch

The code is open to any company in the digital industry as a blueprint for best practice in combating mis- and disinformation online. If you are interested in adopting the code, please contact us at hello@digi.org.au.